“the theory and development of computer systems able to perform tasks that normally require human intelligence, such as visual perception, speech recognition, decision-making, and translation between languages.”

It seems simple enough to program a computer to make decisions based on values and formulas it receives from human entry or available data. Responding to input with a set of available responses using voice technology is also realistic, as is language translation, since translation technologies already exist. With facial recognition, we can now match facial movements to speech patterns and emotional responses, and record which responses are good, appropriate, accurate, and timely. Clearly it would be a daunting task to program a computer to respond to every possible scenario, since the options in human communication seem unlimited, but do we have to? The camera on my laptop stares me in the face all day long. My PC knows what I’m typing, so it should know what pleases or frustrates me. But do we really need cameras and biorhythm tracking to create an interactive, responsive, emotionally sound computer?

With Machine Learning, we are able to teach the system the most accurate, most appropriate response. Machines can adapt, store, and correct responses, but can a machine learn on its own, without specific scenario or situational programming? Does it have to be taught an answer to every question, or can it learn to evaluate a human conversation and determine the best outcome based on emotional cues such as a smile, a stare, a grimace, or an angry move or stance? We have recorded enough drama, sitcom, and real-life situations and storylines that we could certainly program a computer to evaluate each action and line, frame by frame, and store the information for later use in mixing up storylines, reactions, and movements. I can imagine programming a set of emotional responses and offering an input control or data retrieval mechanism so that a system can evaluate the input and provide a verbal, visual, and textual response. If a program can evaluate a sentence and find the correct human emotion, then it could understand the state of a conversation and the person’s satisfaction and, if the right data is available, guide the conversation based on those responses.
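To make the idea of evaluating a sentence for emotion a little more concrete, here is a minimal sketch in Python. It only counts words from a tiny hand-written list, so it is nowhere near real Machine Learning. The `EMOTION_WORDS` lexicon and the `detect_emotion` function are my own hypothetical names for illustration, not part of any existing product or library.

```python
import re
from collections import Counter

# Hypothetical, hand-written lexicon (an assumption for this sketch).
# A real system would learn these associations from recorded data.
EMOTION_WORDS = {
    "happy": {"great", "thanks", "love", "perfect", "wonderful"},
    "frustrated": {"broken", "again", "useless", "waiting", "annoying"},
    "angry": {"terrible", "unacceptable", "refund", "worst"},
}


def detect_emotion(sentence):
    """Return the emotion whose words appear most often, or 'neutral'."""
    words = re.findall(r"[a-z']+", sentence.lower())
    counts = Counter()
    for emotion, vocab in EMOTION_WORDS.items():
        # Count how many words in the sentence belong to this emotion.
        counts[emotion] = sum(1 for word in words if word in vocab)
    emotion, hits = counts.most_common(1)[0]
    return emotion if hits > 0 else "neutral"


print(detect_emotion("The app is broken again"))
```

A system that tracked this label sentence by sentence could, in principle, notice when a conversation is going badly and change its approach.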

AI In Business

We have also recorded enough customer service and sales calls for “Training and Quality Control” to program a computer to repeat what seems to work and what is acceptable when communicating with humans. By creating a knowledge path based on a set of known problems or questions, a computer can replicate a human in problem solving and selling. The challenge is to make it sound like a human, make the human believe it’s a human, and have the system handle all possible outcomes. It’s truly as simple as a database of words, questions, phrases, and answers with responsive sounds that use human tones, emotions, and language, but it requires accuracy checking, matching, and good programming to determine relevancy.
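As a rough illustration of the "database of questions and answers" idea, the sketch below matches an incoming question against a few known questions and returns the answer with the most word overlap. Everything in it is an assumption for the sake of example: the `KNOWLEDGE_BASE` entries, the `best_answer` function, and the 0.3 relevancy threshold are all hypothetical, and word overlap is only one simple stand-in for the relevancy checking a real system would need.

```python
import re

# Hypothetical knowledge base: known questions mapped to scripted answers.
KNOWLEDGE_BASE = {
    "how do i reset my password": "Click 'Forgot password' on the sign-in page.",
    "what are your opening hours": "We are open 9am to 5pm, Monday to Friday.",
    "how do i cancel my order": "Open 'My orders' and choose 'Cancel'.",
}


def tokens(text):
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def best_answer(question, threshold=0.3):
    """Return the scripted answer for the closest known question.

    Relevancy is the share of words the two questions have in common
    (Jaccard similarity). Below the threshold, hand off to a human.
    """
    asked = tokens(question)
    best_score, best_reply = 0.0, None
    for known, reply in KNOWLEDGE_BASE.items():
        known_tokens = tokens(known)
        union = asked | known_tokens
        score = len(asked & known_tokens) / len(union) if union else 0.0
        if score > best_score:
            best_score, best_reply = score, reply
    if best_score < threshold:
        return "Let me connect you with a person."
    return best_reply


print(best_answer("I need to reset my password"))
```

The fallback line matters as much as the matching: it is how a system like this could cope with the outcomes nobody scripted.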

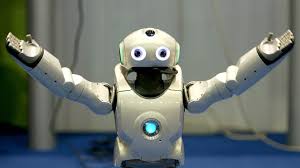

How to make a robot or Artificially Intelligent Machine look, sound, and act the most human has often been a challenging, perplexing idea. The Humanoid is programmable to play, respond to human input, move, and dance, but it lacks human gushy eyes that water when its feelings are hurt, or bones that break when it falls from a roof, or a heart that stops because its arteries are clogged.

So, yes, robots with Artificial Intelligence and Machine Learning are possible, but to what extent? Can we use existing video and storylines as a database to program a computer to evaluate and mimic such recorded actions? I believe it’s possible, and it doesn’t scare me anymore.

If we can program a computer to respond and react to human input such as “close this program,” “type this,” “move this,” or “control that” based on a few keywords, much as the Google Search Algorithm responds to keywords, then I’m certain we can teach a robot to move left when certain conditions exist and to say this or that when other conditions or criteria have been met. We can use the same programming methods in business systems for problem solving, decision making, sales, analytics, and other tasks that can be automated with minimal human intervention.
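The "move left when certain conditions exist" idea can be sketched as a small table of condition and action pairs. This is only a toy under my own assumptions: the `RULES` table, the state keys such as `obstacle_ahead`, and the `decide` function are hypothetical and do not describe how any real robot is programmed.

```python
# Hypothetical rule table: each rule pairs a condition with an action.
# The state keys used here are made up for this sketch.
RULES = [
    (lambda s: s.get("obstacle_ahead") and s.get("left_clear"), "move left"),
    (lambda s: s.get("heard") == "hello", "say hello"),
    (lambda s: s.get("battery", 100) < 20, "return to charger"),
]


def decide(state):
    """Return the action of every rule whose condition holds."""
    return [action for condition, action in RULES if condition(state)]


print(decide({"obstacle_ahead": True, "left_clear": True}))
```

The same pattern carries over to business systems: swap the robot's sensors for order data or support tickets, and the actions for approvals, alerts, or replies.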

It’s actually funny to me sometimes when I call tech support or use an online chat, because the agents are so much like a responsive computer program, and rightfully so. Much like Siri, they seem to lack the ability to solve a problem with personality on the first attempt. I sometimes hear a pause after sharing some information verbally, almost as if the person (or robot) is trying to gather and process the information, searching for the best response to the question. I am even sometimes alarmed that they might be using my unshared thoughts to form the response. In some cases this has rung true, suggesting they may have some access to brainwaves or to possible outcomes I have considered non-verbally while on the line. That would be dangerous and a violation of personal privacy indeed, but what an advancement in technology if it is the case.

Of course, speed of collection, evaluation, decision, and response is of major importance, as are quality, accuracy, and timing. Public opinion and acceptance are important, but AI seems to be well on its way, with or without them.
